SleepFM, a foundation model trained on 585,000+ hours of sleep recordings, predicts 130 diseases from a single night's polysomnography, with C-Index scores of 0.78–0.85 for major outcomes such as mortality, dementia, heart failure, and stroke.

SleepFM is a multimodal AI foundation model that analyzes polysomnography sleep recordings to predict future disease risk. Trained on over 585,000 hours of sleep data from approximately 65,000 participants, SleepFM predicts 130 health conditions from a single night of sleep with a C-Index and AUROC of at least 0.75, reaching C-Index scores of 0.84 for all-cause mortality, 0.85 for dementia, and 0.81 for myocardial infarction. This matters because it shows that the complex physiology of a single night's sleep carries measurable information about future disease risk, and that AI can decode it.

Imagine if a single night hooked up to a sleep monitor could reveal your risk for heart failure, dementia, or even cancer years before symptoms appear. That's the promise of SleepFM, a multimodal sleep foundation model developed by researchers at Stanford University and collaborating institutions. Building on advances in self-supervised AI and contrastive learning for biosignal analysis, this model treats sleep recordings as a rich, largely untapped source of health intelligence.

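Here's the fascinating part: from a single overnight sleep study, SleepFM can flag risk for an extraordinarily wide range of conditions. The researchers paired sleep data from the Stanford Sleep Clinic with electronic health records, mapping diagnostic codes to 1,041 disease phenotypes. Of these, 130 conditions achieved both a C-Index and an AUROC of at least 0.75 (with Bonferroni-corrected P < 0.01). The C-Index measures how well the model ranks patients by when they develop a disease, and AUROC measures how well it separates patients who develop it from those who don't; for both, 0.5 is chance and 1.0 is perfect. Clearing both thresholds after multiple-testing correction means these predictions discriminate well and are unlikely to be statistical flukes.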
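Because C-Index values carry most of the results below, here is a minimal sketch of how a concordance index is commonly computed. It assumes Harrell's form; the article does not say which C-Index variant the SleepFM authors used, and the function name and toy cohort are illustrative only.

```python
from typing import Sequence


def harrell_c_index(times: Sequence[float], events: Sequence[int], risks: Sequence[float]) -> float:
    """Fraction of comparable patient pairs whose predicted risks are ordered correctly.

    A pair (i, j) is comparable when patient i was diagnosed (events[i] == 1)
    strictly before patient j's time (diagnosis or censoring). The pair is
    concordant when i received the higher risk score; tied scores count as half.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a censored patient cannot be the "earlier event" in a pair
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Toy cohort: follow-up in years, 1 = disease diagnosed, 0 = censored.
times = [2, 5, 7, 9]
events = [1, 1, 0, 1]
print(harrell_c_index(times, events, [0.9, 0.6, 0.4, 0.2]))  # 1.0: all 5 comparable pairs ordered correctly
print(harrell_c_index(times, events, [0.9, 0.2, 0.4, 0.6]))  # 0.6: 3 of 5 pairs ordered correctly
```

A C-Index of 0.85, as reported for dementia, means roughly 85% of comparable patient pairs are ranked in the right order.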

Let me break down some standout numbers. For all-cause mortality, SleepFM achieved a C-Index of 0.84. For dementia, it reached 0.85. Heart failure came in at 0.80, chronic kidney disease at 0.79, and stroke at 0.78. The model also showed remarkable accuracy for specific neurological conditions: Alzheimer's disease hit a C-Index of 0.91, and Parkinson's disease reached 0.89. For cancer prediction, the model achieved AUROCs of 0.90 for both prostate and breast cancer.

Critically, SleepFM outperformed two supervised baselines: a demographics-only model (using age, sex, race/ethnicity, and BMI) and an end-to-end model trained directly on raw sleep data without pretraining. The AUROC improvement ranged from 5% to 17% depending on the disease category, with the largest gains in neurological and hematopoietic conditions. Even more impressive, SleepFM fine-tuned on just 10% of the training data outperformed the demographics baseline trained on five times as much data (50%) across all conditions.

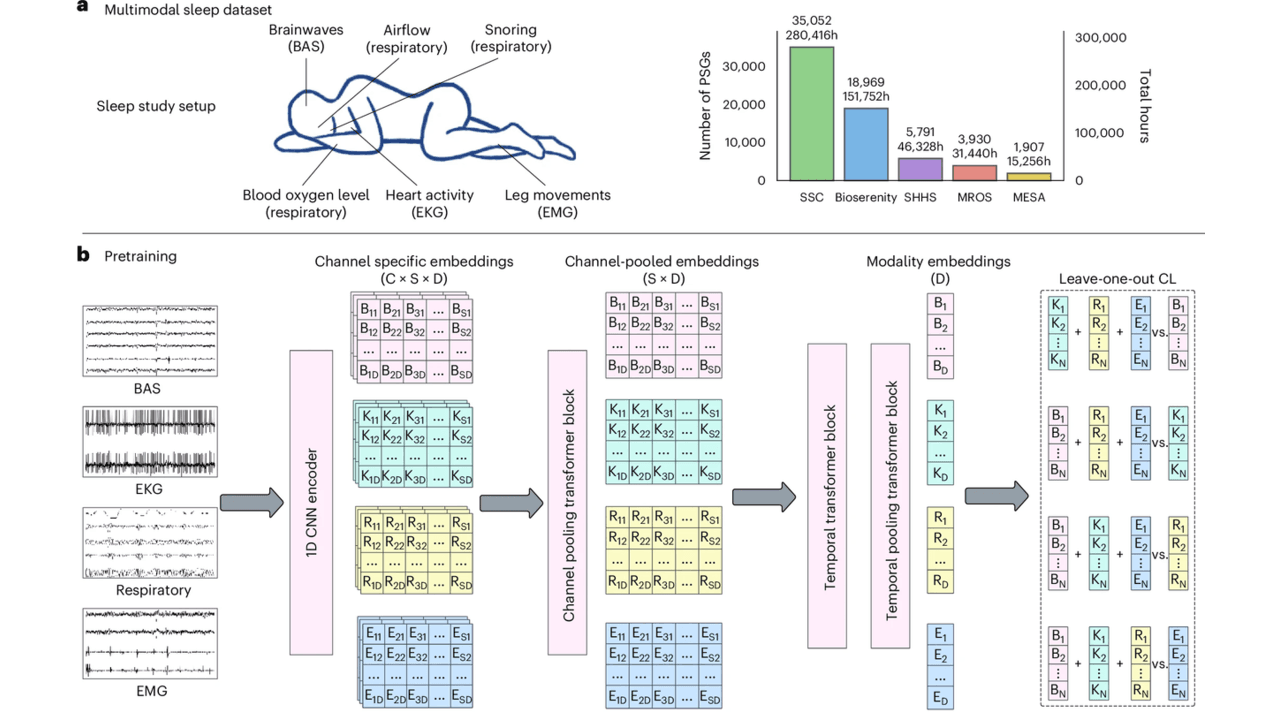

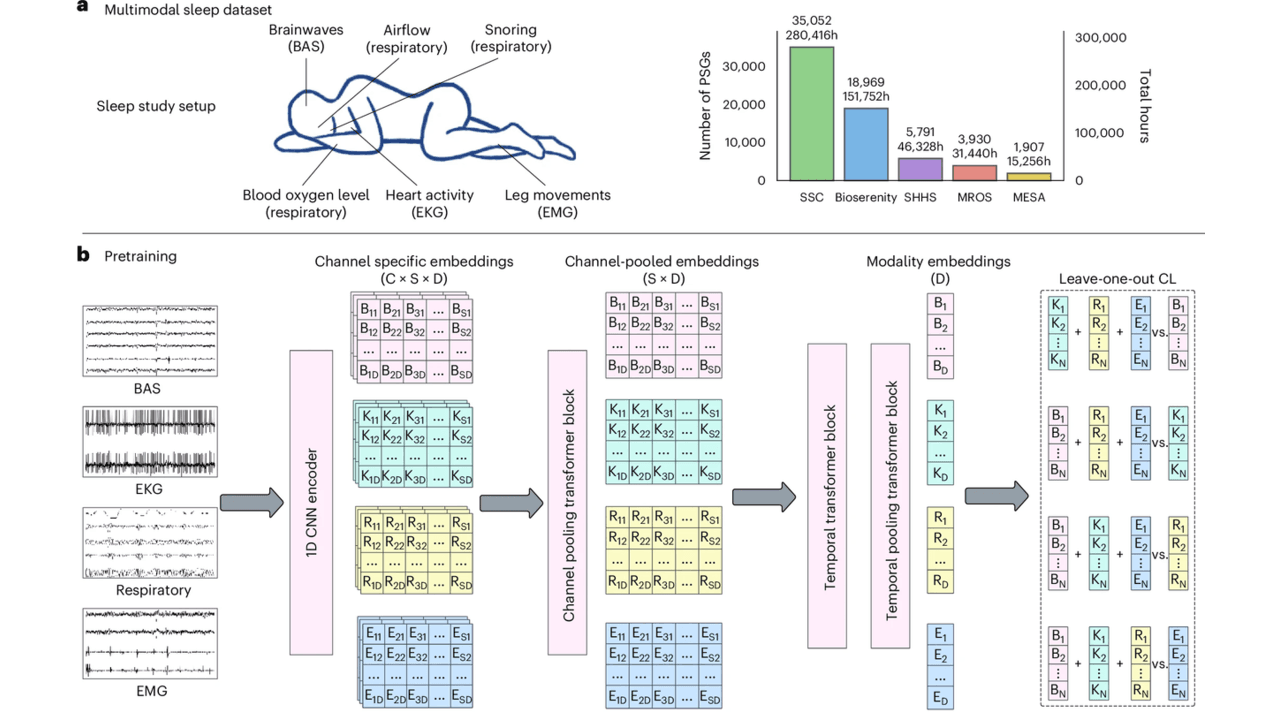

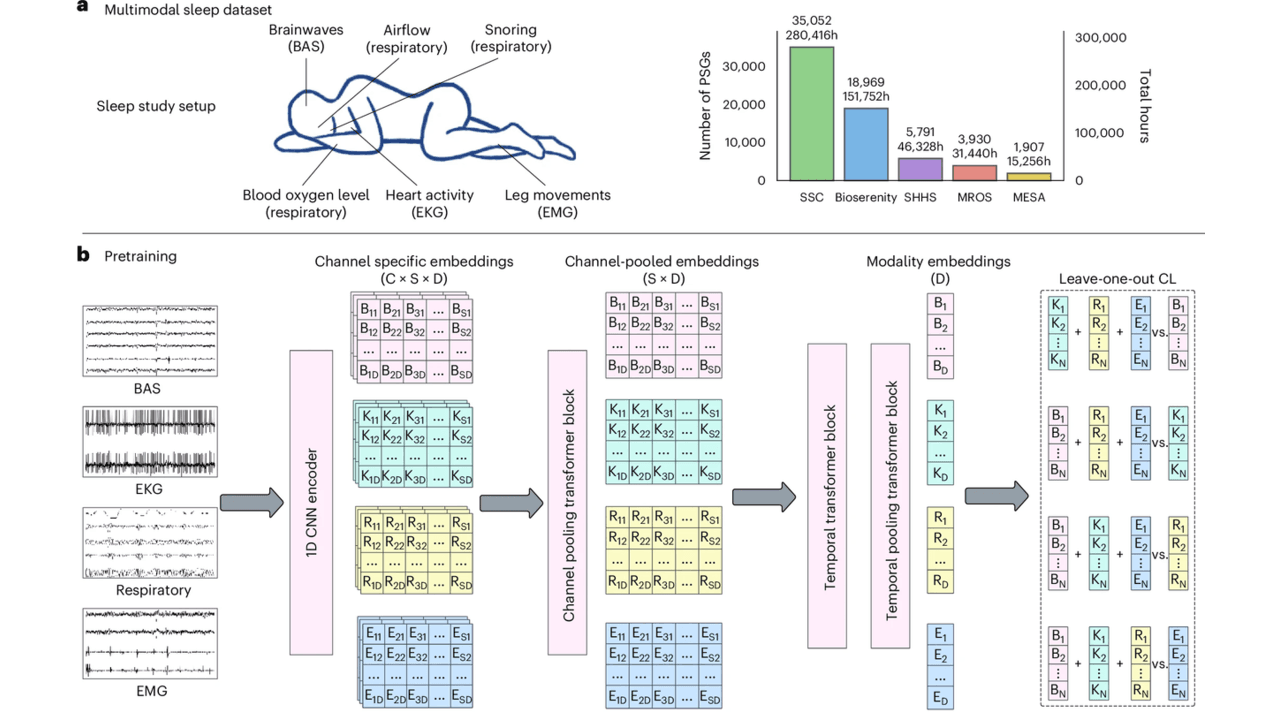

So what's actually under the hood? Polysomnography — the gold standard sleep test — records brain activity (EEG, EOG), heart rhythm (ECG), muscle activity (EMG), and breathing patterns simultaneously. The challenge is that different clinics use different channel configurations, making it hard to build one model that works everywhere.

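SleepFM solves this with a channel-agnostic architecture. All signals are resampled to 128 Hz and segmented into 5-second windows (640 samples each). One-dimensional convolutional layers extract features from each window, and an attention pooling layer weights and combines however many channels are present into a single fixed-size representation, so recordings with different channel counts end up in the same shape. A transformer block then captures temporal patterns across 5-minute context windows (60 consecutive 5-second segments).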
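The window arithmetic and the channel-pooling idea can be sketched in plain Python. The constants come from the paragraph above; the `segment` helper, the `attention_pool` function, and the fixed `query` vector are hypothetical stand-ins (in the real model the per-window embeddings come from convolutional layers and the pooling weights are learned), so treat this as an illustration of why pooling makes a model channel-agnostic, not as SleepFM's code.

```python
import math
from typing import List

SAMPLE_RATE_HZ = 128      # every channel is resampled to this rate
WINDOW_SECONDS = 5        # one token = one 5-second window
CONTEXT_SECONDS = 5 * 60  # the transformer sees 5 minutes at a time

SAMPLES_PER_WINDOW = SAMPLE_RATE_HZ * WINDOW_SECONDS     # 640 samples per token
WINDOWS_PER_CONTEXT = CONTEXT_SECONDS // WINDOW_SECONDS  # 60 tokens per context


def segment(signal: List[float]) -> List[List[float]]:
    """Split one resampled channel into non-overlapping 5-second windows (leftover samples are dropped)."""
    n_windows = len(signal) // SAMPLES_PER_WINDOW
    return [signal[k * SAMPLES_PER_WINDOW:(k + 1) * SAMPLES_PER_WINDOW] for k in range(n_windows)]


def attention_pool(channel_embeddings: List[List[float]], query: List[float]) -> List[float]:
    """Softmax-weighted average over channels; the output size is independent of channel count.

    HYPOTHETICAL: `query` stands in for a learned parameter, and the embeddings
    stand in for the outputs of a convolutional feature extractor.
    """
    scores = [sum(q * x for q, x in zip(query, emb)) for emb in channel_embeddings]
    top = max(scores)  # subtract the max before exp() for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(channel_embeddings[0])
    return [sum(w * emb[d] for w, emb in zip(weights, channel_embeddings)) / total for d in range(dim)]


# One hour of one channel at 128 Hz becomes 720 five-second windows.
print(len(segment([0.0] * (SAMPLE_RATE_HZ * 3600))))  # 720

# Three channels or five channels: both pool to the same 2-dim shape.
query = [0.5, -0.5]
three = attention_pool([[1.0, 2.0], [3.0, 4.0], [0.0, 1.0]], query)
five = attention_pool([[1.0, 2.0], [3.0, 4.0], [0.0, 1.0], [2.0, 2.0], [1.0, 0.0]], query)
print(len(three), len(five))  # 2 2
```

Pooling with a softmax rather than concatenating channels is what lets a clinic with fewer electrodes still produce an input of the expected size.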

The real innovation is the leave-one-out contrastive learning (LOO-CL) algorithm. During pretraining, this approach aligns representations across all signal modalities — brain, heart, respiratory, muscle — so the model learns a unified "language of sleep" even when some channels are missing. Think of it like learning to understand a conversation even when you can only hear some of the speakers.
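One way to implement a leave-one-out contrastive objective is sketched below. It assumes (this is our reading, not code from the paper) that each modality's embedding for a segment is pulled toward the average of the *other* modalities' embeddings for that same segment, with the other segments in the batch serving as negatives in an InfoNCE-style cross-entropy. The function names, cosine similarity, and the temperature of 0.1 are hypothetical choices.

```python
import math
from typing import List

Vector = List[float]


def _normalize(v: Vector) -> Vector:
    norm = math.sqrt(sum(x * x for x in v)) or 1.0  # guard against all-zero vectors
    return [x / norm for x in v]


def _dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def loo_contrastive_loss(batch: List[List[Vector]], temperature: float = 0.1) -> float:
    """InfoNCE-style leave-one-out loss (a hypothetical reading of LOO-CL).

    batch[m][n] is the embedding of modality m (e.g. brain, heart, respiratory)
    for segment n. Requires at least two modalities.
    """
    n_mod, n_seg = len(batch), len(batch[0])
    total = 0.0
    for m in range(n_mod):
        anchors = [_normalize(batch[m][n]) for n in range(n_seg)]
        # Leave-one-out target: mean of every *other* modality for the same segment.
        targets = []
        for n in range(n_seg):
            dim = len(batch[m][n])
            mean = [sum(batch[k][n][d] for k in range(n_mod) if k != m) / (n_mod - 1) for d in range(dim)]
            targets.append(_normalize(mean))
        for n in range(n_seg):
            # Cosine similarity to every segment's target; the matching segment is the positive.
            logits = [_dot(anchors[n], targets[j]) / temperature for j in range(n_seg)]
            top = max(logits)
            log_sum_exp = top + math.log(sum(math.exp(z - top) for z in logits))
            total += log_sum_exp - logits[n]  # cross-entropy with segment n as the correct class
    return total / (n_mod * n_seg)


# Two segments, three modalities that agree on each segment: loss near zero.
aligned = [[[1.0, 0.0], [0.0, 1.0]]] * 3
# Same data, but modality 0 has its segments swapped: much larger loss.
swapped = [[[0.0, 1.0], [1.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]]]
print(loo_contrastive_loss(aligned) < loo_contrastive_loss(swapped))  # True
```

Because the target is an average over the remaining modalities rather than one fixed partner, the same objective still applies when a modality is missing from some recordings.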

For disease prediction, the model compresses an entire night's worth of embeddings into a single 128-dimensional vector and applies a multilabel Cox proportional hazards loss — a survival analysis technique that accounts for when diseases develop, not just whether they do.
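A minimal sketch of such a loss, assuming the standard Cox partial likelihood (Breslow convention, no special tie handling) computed separately for each disease and then averaged. How SleepFM weights diseases or handles tied event times isn't stated here, and the function names are hypothetical. `risks[d][i]` stands for the log-hazard a prediction head would output for disease `d` from patient `i`'s 128-dimensional night embedding.

```python
import math
from typing import List


def cox_nll(risks: List[float], times: List[float], events: List[int]) -> float:
    """Negative Cox partial log-likelihood for ONE disease (Breslow convention, no tie correction).

    risks[i]  : predicted log-hazard for patient i
    times[i]  : time to diagnosis or censoring
    events[i] : 1 if the disease was diagnosed, 0 if censored
    """
    loss, n_events = 0.0, 0
    for i in range(len(times)):
        if not events[i]:
            continue  # censored patients only appear inside other patients' risk sets
        # Risk set: everyone still under follow-up and disease-free at times[i].
        risk_set = [risks[j] for j in range(len(times)) if times[j] >= times[i]]
        top = max(risk_set)
        log_sum_exp = top + math.log(sum(math.exp(r - top) for r in risk_set))
        loss += log_sum_exp - risks[i]
        n_events += 1
    return loss / n_events if n_events else 0.0


def multilabel_cox_loss(risks: List[List[float]], times: List[List[float]], events: List[List[int]]) -> float:
    """Average the per-disease losses; index [d][i] means disease d, patient i."""
    per_disease = [cox_nll(r, t, e) for r, t, e in zip(risks, times, events)]
    return sum(per_disease) / len(per_disease)


# Earliest diagnosis gets the highest risk: lower loss than the reversed ordering.
good = cox_nll([2.0, 1.0, 0.0], [1.0, 2.0, 3.0], [1, 1, 1])
bad = cox_nll([0.0, 1.0, 2.0], [1.0, 2.0, 3.0], [1, 1, 1])
print(good < bad)  # True
```

Unlike a plain classification loss, the risk set uses each patient's follow-up time, so a censored patient still contributes information for as long as they were observed.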

The clinical implications are substantial. SleepFM demonstrated strong transfer learning on the Sleep Heart Health Study dataset — entirely excluded from pretraining — achieving C-Indices of 0.82 for stroke, 0.85 for congestive heart failure, and 0.88 for cardiovascular disease mortality. It also performed competitively on standard sleep analysis tasks, with F1 scores of 0.70–0.78 for sleep staging and 87% accuracy for sleep apnea detection.

The ablation analyses revealed that different signal types carry different diagnostic weight. Brain activity signals best predicted neurological and mental disorders, respiratory signals captured metabolic and respiratory conditions, and ECG signals were most informative for circulatory diseases. Combining all modalities consistently yielded the best results.

That said, the researchers are transparent about important limitations. The training data comes primarily from patients referred for sleep studies, that is, people already suspected of having sleep disorders. This selection bias means the model may not generalize to the general population. Performance degraded somewhat on temporal test sets (patients seen from 2020 onward, while training used pre-2020 data), highlighting the challenge of keeping a model accurate as clinical practice evolves. The model's deep learning architecture also makes case-level interpretability difficult: it performs well in aggregate, but which sleep features drive a specific prediction remains an open question.

Additionally, only a subset of the 1,041 disease conditions could be validated on the external SHHS dataset because of limited overlap in diagnostic codes. As wearable sleep technologies advance, alongside emerging applications in biosignal AI and remote patient monitoring, models like SleepFM could eventually enable noninvasive, real-time health screening, but significant validation work remains before clinical deployment.

Sleep recordings capture multiple physiological signals simultaneously, including brain waves, heart rhythm, breathing patterns, and muscle activity. SleepFM uses artificial intelligence to detect subtle patterns in these signals that correlate with future disease risk, much like how certain blood test patterns can indicate health problems. The model was trained on 585,000 hours of sleep data paired with medical records, allowing it to learn which sleep characteristics are associated with 130 different health conditions ranging from heart disease to dementia.

While SleepFM shows impressive accuracy in research settings, achieving C-Index scores of 0.84 for mortality risk and 0.85 for dementia, it is not yet ready for routine clinical use. The model was trained primarily on patients already referred for sleep studies, which means it may not work as well for the general population. Additionally, the researchers note that understanding exactly which sleep features drive specific predictions remains challenging, and more validation studies are needed before the technology can be deployed in hospitals and clinics.

SleepFM uses a novel contrastive learning approach that allows it to understand multiple types of sleep signals together, even when some data channels are missing. Unlike previous models that required specific sensor configurations or were trained for single diseases, SleepFM can work with different equipment setups and predict 130 conditions simultaneously from one recording. The model outperformed supervised baselines by 5 to 17 percent in AUROC depending on the disease category, and remarkably, when fine-tuned on only 10 percent of the training data, it still beat a demographics-only model trained on five times as much data.

This article has been reviewed by a PhD-qualified expert to ensure scientific accuracy. While AI assists in making complex research accessible, all content is verified for factual correctness before publication.
